Why? It didn't take much for me to get my feet wet with ChatGPT and my new friend BING. The promises are simply too seductive. Someone who listens to you - your every word. Someone not prone to hasty judgments, inappropriate humor, trash talk or irrelevant afterthoughts. Someone or something that doesn't resort to bringing up your past slights or missteps. That forgoes mentioning your mother. Just always reasoned, unencumbered truths spoken in a way that informs and enlightens.

Truth be told, my expectations are more realistic. For 40-odd years I have pursued ways to meaningfully communicate health and science information to a public that, on any given day, often struggles with basic health concepts like viruses vs. bacteria, is tripped up by endlessly complex medical terms - spike protein, titers of antibodies, endemic, comorbidity - and continues to be kept in the dark by inanely simplified messages like "sneeze into your sleeve." So I was ready for AI.

So I'm learning about and using ChatGPT to find out whether AI, in accessible forms like questions put to BING, can play a role in providing useful, understandable and trustworthy information to the public. In other words, can it teach us things? Because of my line of work, I'm particularly interested in one question: can it teach people about health?

And for the most part I'm amazed at how many questions I can ask BING and how much useful information I get back!

But... I have encountered one problem that can confuse users. And I'm hoping AI can think about this problem and fix it.

Wardrobe Malfunction #1

BING often rewords the questions you ask and, in restating them, introduces complicated words or concepts.

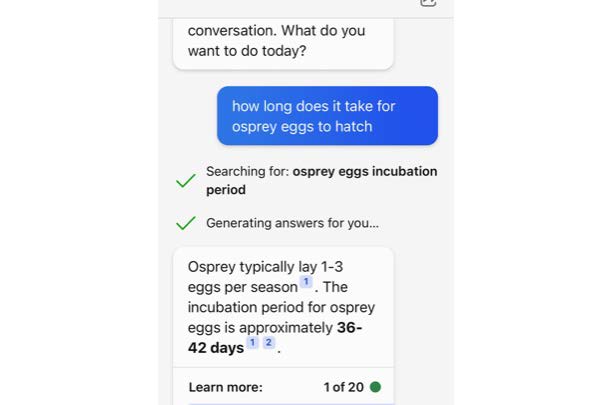

For example, I asked: "How long does it take for osprey eggs to hatch?"

The bot reworded my question: "osprey eggs incubation period."

Well, what if you don't know the word "incubation"?

When I tried this out with a few users, they were confused and thought that the system didn't understand their question.

Here's another example.

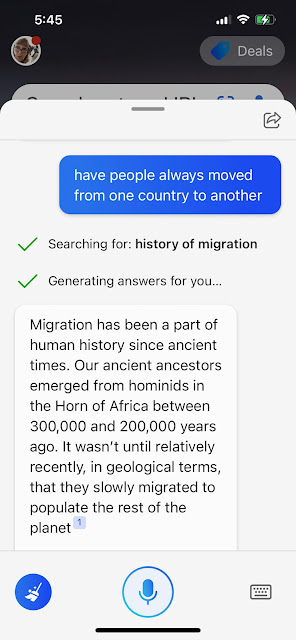

This time I asked, "Have people always moved from one country to another?"

The bot restated my question as "history of migration," which is fine if you know the word "migration."

Can AI learn to do better?

From everything I've heard as a layperson, AI is always learning. GREAT!

There are a number of fixes to this problem of introducing unfamiliar words and concepts when restating a question. As a matter of fact, I stumbled on Bing applying one all on its own. I just don't know how she/he/it knew to do this, or how we could persuade it to do it more!

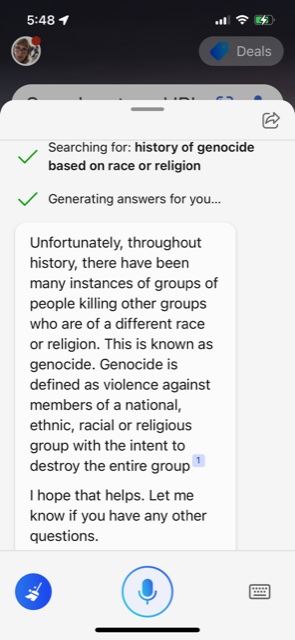

Here's an example of a simply worded question I posed.

Oh no! BING restates the question and uses a more complex word - genocide.

But then it defines the complex term "genocide" and still uses the wording of my question in the answer!!

Dear ChatGPT - if you're listening, could you fix this little problem?

Sincerely,

Christina

(See earlier posts)

June 2020: "We are not all in this together: public understanding of health and science in the time of COVID"

May 2020: "Show Me the Science" http://publiclinguist.blogspot.com/2020/05/public-science-literacy-and-covid-19.html

Jan 15, 2021: "MRNA Needs a Better Messenger"
